Ru Peng (Perry)

"Only love endures the passage of time"

I’m a 4th-year PhD student in the Computer Science Department of Zhejiang University (ZJU), advised by Professors Junbo Zhao and Gang Chen, and affiliated with DiLab-ZJU and the State Key Laboratory of Blockchain and Data Security. I was also a research intern on the Alibaba Qwen Team, working with Dayiheng Liu, Chang Zhou and Junyang Lin on data management and synthesis for the Qwen series models. Previously, I was fortunate to collaborate with Professors Tianyong Hao, Yi Fang and Kehai Chen, who introduced me to research.

My research spans multiple AI areas, including LLMs (current emphasis), machine learning, NLP, and multimodality, listed below in reverse chronological order.

- LLM Agents & RL: currently focusing on LLM agents and reinforcement learning;

- LLM Data: previously worked on pre-training data management and data synthesis;

- Unsupervised Model Evaluation: developing contrastive and energy-based unsupervised model evaluation across varied environments;

- Machine Translation: including multimodal, sign language, and text-only machine translation.

I am open to opportunities across academia and industry — feel free to get in touch!

🔥 News

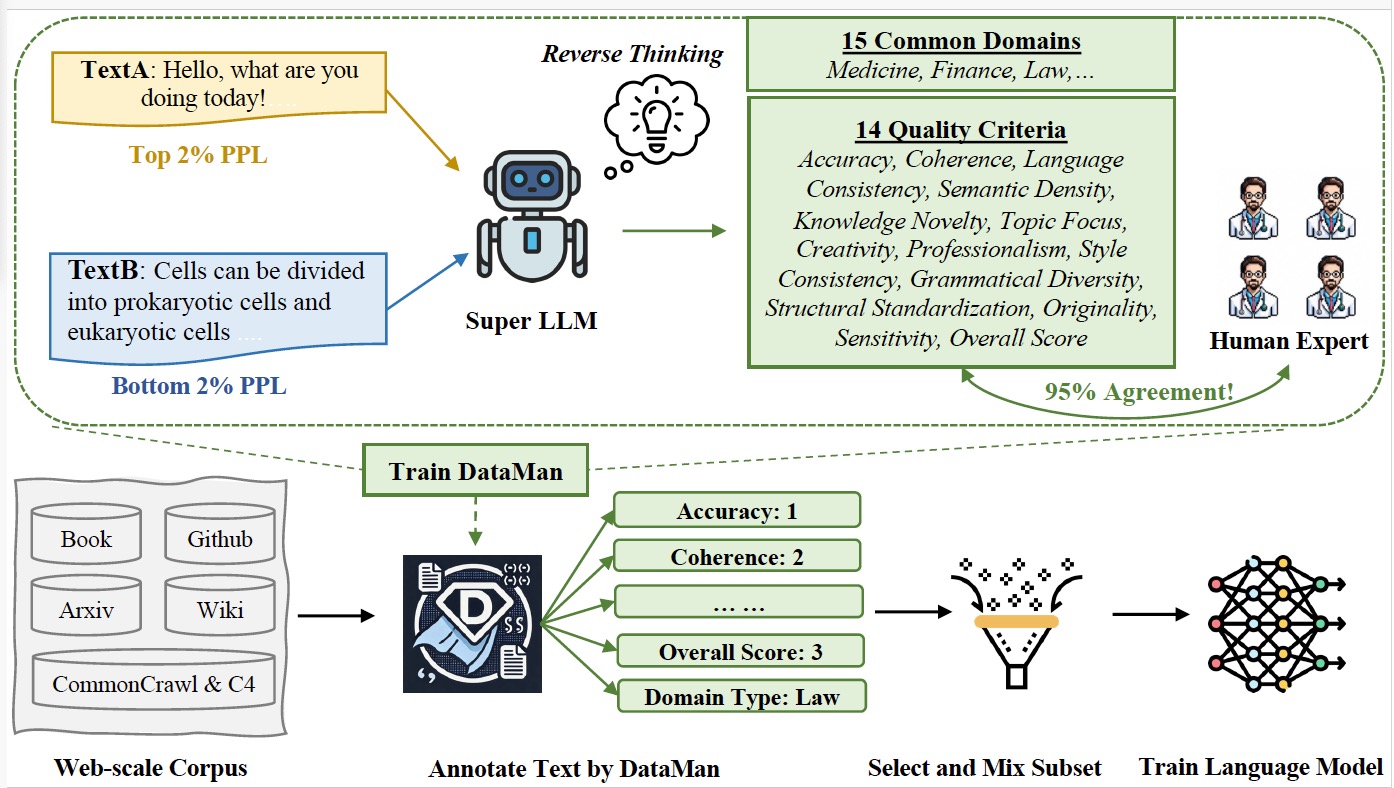

| Apr 15, 2026 | Our paper “[DataXman: Selecting and Mixing Pretraining Data via Bilingual Mixture-of-Experts Data Manager]” is accepted at SCIENCE CHINA Information Sciences 2026! |

|---|---|

| Apr 07, 2026 | Our paper “[HSS-Synth: Humanities and Social Sciences Data Synthesis for LLMs]” is accepted at ACL Findings 2026! |

| Mar 02, 2026 | Our paper “W2S: Weak-to-Strong Prompt Correction for Large Language Models” is accepted at Machine Learning 2026! |

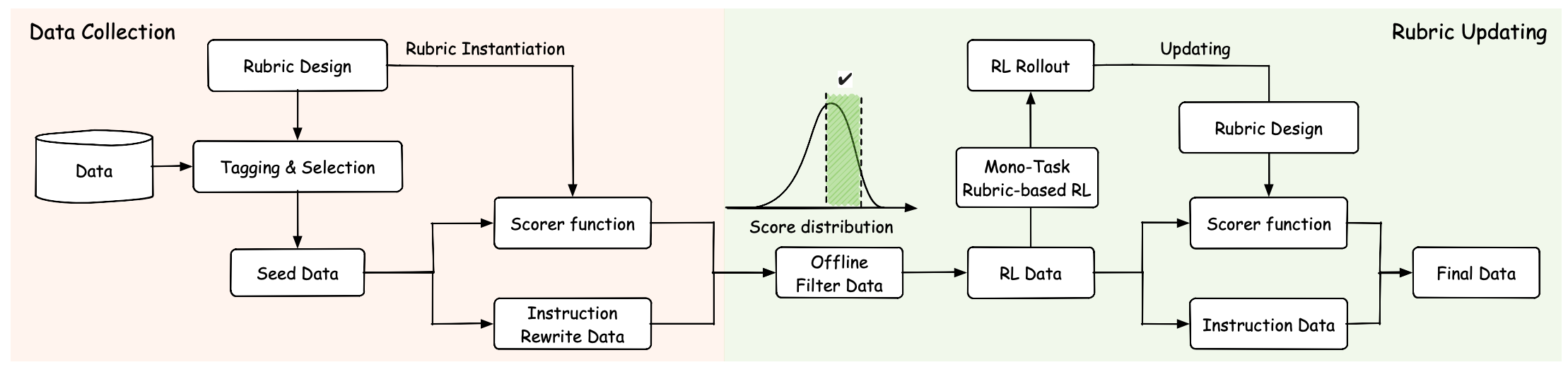

| Jan 26, 2026 | Our paper “OptimSyn: Influence-Guided Rubrics Optimization for Synthetic Data Generation” is accepted at ICLR 2026! |

| Aug 18, 2025 | Ant RL technical report “Reinforcement learning with rubric anchors“(extending RLVR with 10k+ Rubric rewards) is now released. |

| Feb 11, 2025 | Our paper “LLM-Enhanced Query Generation and Retrieval Preservation for Task-Oriented Dialogue” is accepted at Findings of ACL 2025! |

| Feb 11, 2025 | Our paper “DataMan: Data Manager for Pre-training Large Language Models” is accepted at ICLR 2025! |

| Dec 19, 2024 | Qwen2.5 technical report are released now. |

| Sep 20, 2024 | One paper “Inference-Time Decontamination: Reusing Leaked Benchmarks for Large Language Model Evaluation” is accepted at Findings of EMNLP 2024 and two paper “Predicting Rewards Alongside Tokens: Non-disruptive Parameter Insertion for Efficient Inference Intervention in Large Language Model”, “Embedding and Gradient Say Wrong: A White-Box Method for Hallucination Detection” are accepted at EMNLP 2024! |

| Sep 19, 2024 | Qwen2.5 series foundation models are released now. |

| Jul 15, 2024 | Qwen2 technical report are released now. |

| Jul 04, 2024 | Release the paper of “Dotamath” for mathematical reasoning. |

| Jun 17, 2024 | Qwen2 series foundation models are released now. |

| May 16, 2024 | Our paper “DORY: Deliberative Prompt Recovery for LLM” is accepted at Findings of ACL 2024! |

| Feb 04, 2024 | Qwen1.5 series foundation models are released now. |

| Jan 16, 2024 | Our paper “Energy-based Automated Model Evaluation” is accepted at ICLR 2024! |

| Oct 23, 2023 | I started my internship at Alibaba Qwen Team! Ping me if you want to meet up in HangZhou :) |

| Jul 15, 2023 | Our paper “CAME: Contrastive Automated Model Evaluation” is accepted at ICCV 2023! |

| Oct 06, 2022 | Our paper “Distill The Image to Nowhere: Inversion Knowledge Distillation for Multimodal Machine Translation” is accepted at EMNLP 2022 (Oral)! |

| Sep 10, 2022 | Started my PhD’s degree at College of Computer Science and Technology of Zhejiang University! |

| Apr 06, 2022 | Our paper “HybridVocab: Towards Multi-Modal Machine Translation via Multi-Aspect Alignment” is accpeted at ICMR 2022 (Oral)! |

📝 Selected Publications

💼 Work Experience

Qingyun Program Research Intern on Large Language Models

Focused on agentic mid-training and RL.

Plan-A Research Intern on Large Language Models

Mentor: Junbo Zhao

Contributed to reinforcement learning from rubric rewards for both closed-ended and open-ended tasks.

Research Intern on Large Language Models

Mentor: Dayiheng Liu, Junyang Lin and Chang Zhou

- Contributed to the Qwen1.5/2/2.5 series base models.

- Developed Data Manager (DataMan and DataXMan) for data selection and mixing in LLM pre-training, adopted in the Qwen base models and reported in Synced (机器之心).

- Contributed to data synthesis in open-ended tasks for the Qwen series models.

📚 Academic Services

- Conference Reviewer: ICLR 2024, 2025; ICML 2023, 2025; NeurIPS 2022, 2023, 2024; CVPR 2025; ICCV 2023, 2025; ECCV 2024; ACL 2024, 2026; AISTATS 2025; COLM 2024.

- Journal Reviewer: IEEE Transactions on Big Data (TBD), Transactions on Machine Learning Research (TMLR).

- Publication Chair: International Conference on Natural Language Processing (ICNLP) 2025.

😊 Miscellaneous

I love music 🎵, sports (basketball 🏀, football ⚽, running 🏃‍♂️, etc.), traveling the world 🗺️, hanging out with friends 🍻, and trying anything new. One of my favorite songs is 失恋ソング沢山聴いて泣いてばかりの私はもう。